This is from an AMA (Ask Me Anything) session that I conducted on LinkedIn on November 28, 2022. The premise of the AMA was for people to ask me anything about the world of Fractional CTOs or anything else that they could think of.

Arun Aravamudhan

Great answers. A couple of questions: (1) Given that you are billed by the hour, how do you balance recommending long-term / irreversible decisions (eg tech stack or framework decisions) vs short-term tactical? (2) What happens if you get the sense that the existing team doesn’t have the skillset to accomplish what you have set out to do? Do you bring yr own team on board (example: SREs, java/ reactjs developers etc)?

Marc Adler

Thanks for the questions, Arun.

> Given that you are billed by the hour, how do you balance recommending long-term / irreversible decisions (eg tech stack or framework decisions) vs short-term tactical?

This is why I like to get new clients when they have not started any development yet. Because I get to make the technical decisions. And these decisions are not affected by the fact that I am billing by the hour. These are technical decisions that try to carry as little risk as possible, and I always try to stick to well-known, well-tested platforms and patterns.

Your question is interesting because I try not to choose technologies that would require me to stay around for a long time just to make sure that the team knows how to use them effectively. For example, I might not suggest … let’s say … quantum computing as a technology to use because (1) I don’t know it and I would have to spend significant time learning it, maybe at the client’s expense, and (2) it’s hard to hire a remote dev shop that has people with these skills. So I try not to lead a founder and a dev team down a path that they cannot recover from.

> What happens if you get the sense that the existing team doesn’t have the skill set to accomplish what you have set out to do? Do you bring yr own team on board (for example: SREs, java/ reactjs developers, etc)?

There have been many times when a member of a remote dev team does not work out on a project that I am leading. This is why I like to use dev shops that have a lot of people. Because there is usually a variety of specialists that float around those companies and can be brought into a project on a temporary basis.

However, I will sometimes bring on a specialist that I might know if I cannot find someone with that skillset in the remote dev shop.

Arun Aravamudhan

Fantastic answers. I love your take on ‘well-known, well-tested platforms and patterns’. Boring is better (straightforward and solves the business problem). Example: modern Java as opposed to GoLang for a (boring) web app that needs to scale (resume-driven development wink, wink).

Mark Batty

As the AMA is still open 😉 I’m curious if/how you handle trunk-based dev with large features, feature flags, or something else for example?

More importantly, as a fractional rather than full-time how do you create/develop environments that encourage best-practice/high performance?

Marc Adler

Hi Mark. Yes, the AMA is still open. It’s a living and breathing document, and people can feel free to add questions here whenever they want.

Concerning creating the dev environments … I try to suggest my own dev standards to the remote dev team. There are a number of standard things in my toolbox that I have accumulated over the years and I feel most comfortable with that toolset. This includes things like proper source code control management, CI/CD with gated checks, a complete set of automated tests, etc. But I like to try to have that one really senior dev leader on the team who will be the leader from the remote dev shop’s side. And sometimes, these remote dev shops have their own best practices for development. As a fractional CTO (as opposed to a full-time CTO), sometimes I let the dev team use their best practices once I get to know them and understand them. Being a fractional CTO on limited hours for my client, there is only so much time that you can put into creating the perfect dev environment. Sometimes, you just have to give the team guidelines and loosely enforce them.

And, yes … I do like to use feature flags and various other architectural patterns that make it easy to enable/disable certain capabilities as the app is being tested. I also like to have apps use dynamic configuration, so settings can be changed at run-time.
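
A minimal sketch of the feature-flag and dynamic-configuration idea, in Python. Everything named here is hypothetical and chosen only for illustration: the `FeatureFlags` class, the JSON file as the source of settings, and the `holiday_discount` flag. It re-reads the file on every check so that a change takes effect at run-time without a restart; a production setup would more likely cache the values or use a dedicated flag service.

```python
import json
from pathlib import Path


class FeatureFlags:
    """Hypothetical flag store backed by a JSON file that can change at run-time."""

    def __init__(self, config_path):
        self.config_path = Path(config_path)

    def _load(self):
        # Re-read on every call so edits to the file apply without a restart.
        try:
            data = json.loads(self.config_path.read_text(encoding="utf-8"))
        except (FileNotFoundError, json.JSONDecodeError):
            return {}
        return data if isinstance(data, dict) else {}

    def is_enabled(self, name, default=False):
        # Unknown flags fall back to the default, so new code ships "dark".
        return bool(self._load().get(name, default))


def checkout_total(prices, flags):
    """Example capability guarded by a flag (assumed 10% discount)."""
    total = sum(prices)
    if flags.is_enabled("holiday_discount"):
        total = round(total * 0.9, 2)
    return total
```

With a file containing `{"holiday_discount": false}`, `checkout_total` returns the plain sum; changing the value to `true` while the app is running switches the discount on for the next call.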

All this said, I am a lifelong learner and I am always happy to get advice, learn about new tools and frameworks, and overall, make the dev teams as productive as possible.

Mark Batty

Marc Adler thanks, all good points, and lifelong learning is definitely the key.

Those of us with 30+ years of experience know a lot, have done a lot, and have seen a lot – but not absolutely

Richard Meyer

Hi Marc, here’s a question: what’s a sustainable ratio of QA engineers to developers? ie: people who either run manual or automated tests. Obviously, this is a bit of a “how long’s a piece of string” question, but what’s a typical industry standard ratio?

Marc Adler

For the teams that I like to put together, the developers should be writing the unit tests (yeah, good luck with that!). But the number of AUTOMATED QA engineers depends on the number of use cases and the types of platforms the app is designed to run on (web, iOS, Android, headless). For automated tests on mobile devices, you might need some specialists who know those platforms. But generally, I don’t think of ratios of QA to devs. I think of how many automated QA engineers it would take to fully implement tests for all of the use cases in the BRD. Both positive tests and negative tests. Of course, you also need to think about Soak and Stress testing, which might require a specialist and another testing framework.
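
To illustrate what "positive tests and negative tests" for a single use case can look like, here is a small sketch using Python's standard `unittest` module. The `withdraw` function and its rules are hypothetical stand-ins for a use case from a BRD, not something taken from a real project.

```python
import unittest


def withdraw(balance, amount):
    """Hypothetical use case: withdraw money from an account."""
    if amount <= 0:
        raise ValueError("amount must be positive")
    if amount > balance:
        raise ValueError("insufficient funds")
    return balance - amount


class WithdrawTests(unittest.TestCase):
    def test_positive_valid_withdrawal(self):
        # Positive test: valid input produces the expected result.
        self.assertEqual(withdraw(100, 30), 70)

    def test_negative_overdraft_rejected(self):
        # Negative test: invalid input must be rejected, not silently accepted.
        with self.assertRaises(ValueError):
            withdraw(100, 500)

    def test_negative_non_positive_amount_rejected(self):
        with self.assertRaises(ValueError):
            withdraw(100, 0)


def run_tests():
    """Run the suite programmatically and return the result object."""
    suite = unittest.defaultTestLoader.loadTestsFromTestCase(WithdrawTests)
    return unittest.TextTestRunner(verbosity=0).run(suite)


if __name__ == "__main__":
    run_tests()
```

Counting use cases this way (one positive path plus the negative paths that matter) gives a rough sense of how much automated test work a requirements document implies.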

Scott Davis, Computer Software Executive (retired) & Board Member

Hi Marc,

In my experience, CTO can be a bit of a Jack of All trades role, at times a combination of Chief Architect and/or Engineering leader, technical visionary, product champion, and definer, lead customer engineer for sales, technical marketing, business development and partnerships, technical spokesperson for the company including being the person who convinces customers to buy and investors to invest, and ensuring that the engineering team is kept apprised of relevant new technologies and products, just to name a few! It’s very clear to me how it might be very productive for a startup to engage a fractional CTO for the Chief Architect/VP of Engineering type role, but less so for all the other potential duties. Would like to hear your perspective on these other duties and when a fractional CTO is appropriate or not. Thanks!

Marc Adler

Wow, the former CTO of VMware asked me a question. I am honored!

All of the various responsibilities that you listed are things that I have done at one time or another, but it really depends on the kind of company that you are the CTO/CA of. For example, for large financial companies, I really do not have any interaction with customers, unless you consider the traders and portfolio managers my “customers”. But, in those companies, I have been responsible for most of the other functions that you listed.

Since many of my CTO-as-a-Service clients are startups, they don’t really have customer bases, and I don’t really have to keep the engineering team apprised of new technologies, since the technical teams are often offshore developers whose sole job is to implement the product. Of course, while I am involved with my clients, I keep my eye on interesting technologies, but I have to be very aware that most of my startup clients have just enough funding to build an MVP and at the early stages, they cannot afford products that have huge license fees.

As far as “technical marketing” goes, I will often be responsible for the technical parts of decks that are presented to potential investors and customers. I will also meet potential investors at times.

If you want my opinion about the most important job of the fractional CTO, it’s to represent all of the interests of the startup founder when dealing with development teams. Every week, I speak to one or more founders who mocked up their idea in something like Figma and threw it over the fence to a development company, leaving all architectural and technical decisions to the development company. Many times, the startup founder has zero idea of what the development team is doing, what infrastructure they have chosen, what future costs will look like, etc. Sometimes, the founders don’t even know where their source code is being kept. I have said this over and over … a non-technical founder should NEVER go into an engagement with a development company without a senior technical person on their side, representing their interests. This is a function of an fCTO that is really not applicable if you are the CTO of an established company.

Of course, I do all of the technical stuff for my founders, including the architecture, cloud setup and administration, running the dev team, helping to write the product requirements, etc. In a startup, the fCTO often combines multiple functions that, in established companies, you would have separate individuals doing.

Sam Alexander

How do you handle “chicken vs. egg” prospective clients who are looking for a fractional CTO to attach to their venture in order to secure more funding – so that they can hire a fractional CTO? To what extent do you offer assistance (free or paid) before there is funding for the full MVP/project (ie. giving budget ranges, looking over pitch decks, giving them a bio to share, etc)?

Kathy Keating

I will typically give 1-2 hours of conversation for free, then we will work out a plan together based on what the founder/company can afford and what my pay rate is.

I am a Techstars mentor for several of their accelerators (and globally). My time is offered freely to founders in that network when they are in the program. I’ve been known to create a small number of equity arrangements in exchange for advisory services for founders who are out of the program but don’t yet have traction enough to pay for my services.

As Marc Adler says, each fCTO has a slightly different agreement. I tend to operate with a monthly retainer that includes a set number of days worked, on average, per week and access to me via Slack.

Marc Adler

Unlike Kathy, I do not work with equity arrangements. I have gotten burned by a few of those in the past, and at this point in my career, cash is king. I get solicited all of the time from people who might find me on AngelList or through my website, wanting me to be their co-founder in exchange for equity. Although that might appeal to certain fCTOs, it’s something that I just cannot do.

Mark Batty

Hi Marc Adler, as a (full-time/employed) CTO we sometimes work with partners to provide various specialized/expert products/services.

I’m curious if you work with any partners (formal or informal) and if so what are the differences when fractional?

Also any tips you might have; particularly around partnership agreements, fees, etc.; thanks.

Marc Adler

Hi again, Mark. I have a few dev shops that I work with. Since my job is fractional, and it involves multiple clients, my “surface area” is spread over multiple projects at a dev company. Sometimes, this will give me a bit of leverage in terms of getting good prices for my clients. It also sometimes lets me have an easier path when disputes happen.

As an example, let’s say that DevCompany X wants to charge my client $50/hr for a mid-level backend developer. I might say to them “You charged my other client $45 two months ago, and I told my new client what the expected rate would be.” So, because I have multiple clients, I have a lot more recent history and knowledge, and I can use that to help my clients.

That being said, I am not tied into any formal arrangement with any company over the span of multiple clients. If there is another company that can provide an equal or better service to a new client, then I am happy to explore those other possibilities. It’s all about serving the client in the best way that I can.

I also want to stress that I have no financial ties with any of the dev companies. Maybe I will get a box of chocolates for Christmas, but that’s about it.

Mark Batty

Marc Adler thanks, that helps.

Janani Vasudevan

Marc Adler – thank you for doing this. When someone wants to transition to being a first-time CTO, what are some things you’d ask them about their readiness? Wondering what the main differences are between being a VP or director of engineering and being a CTO.

Marc Adler

Hi Janani. Thanks for this very interesting question.

First of all, as I have mentioned, the title of “CTO” takes on many shapes and sizes. You can be a strictly high-level, non-technical CTO … I have seen many CTOs that were really Product Managers … or you can be the senior developer on a two-person dev team … I have seen those as well. It also depends on the organization that you are shooting for … do you want to be the CTO of a mega-corporation or the CTO of a startup? Each situation demands different skills.

I view the CTO position as being more strategic and high-level than the typical VPE position. Whereas a VPE or Director of Engineering might concentrate on getting the features built and keeping the system running smoothly, a CTO is generally focused on more of the planning, budgeting, and exploration. The CTO will also typically face off with CTOs and CEOs from other vendors as partners, will interact with the CIOs and CEOs, and might be present at the executive level and board meetings. The CTO is responsible for every technical aspect of the organization, whereas the VPE is concentrating on the more tactical stuff.

What I said above is definitely a huge generalization, and again, it depends on the kind and the size of the company that you work for.

I am going to point you to an article that I wrote three years ago on the role of the Chief Architect. In some organizations, the Chief Architect is the de facto CTO.

Janani Vasudevan

Marc Adler thank you!

Vinicius 💻⛰ Gravina da Rocha

Curious about your thoughts on NoCode

Marc Adler

I will be honest with you … I have not kept up with the evolution of some of the modern NoCode platforms like Bubble. I know several founders who created their MVPs using Bubble, and some of those MVPs were sufficient to secure more funding. But, when it came time to build the actual application, most of them moved away from Bubble.

Also, using Bubble required a non-technical founder to spend time learning the platform rather than spending time on things that founders should be doing. Maybe this is why there is a healthy cottage industry for freelance Bubble consultants.

Someone else is going to have to comment about the viability of the current version of Bubble to build a production application that is resilient and can scale.

Vinicius 💻⛰ Gravina da Rocha

What stack would you choose for a web app and why? (As a thought exercise, that’s the requirement: a web app.. hehe)

Marc Adler

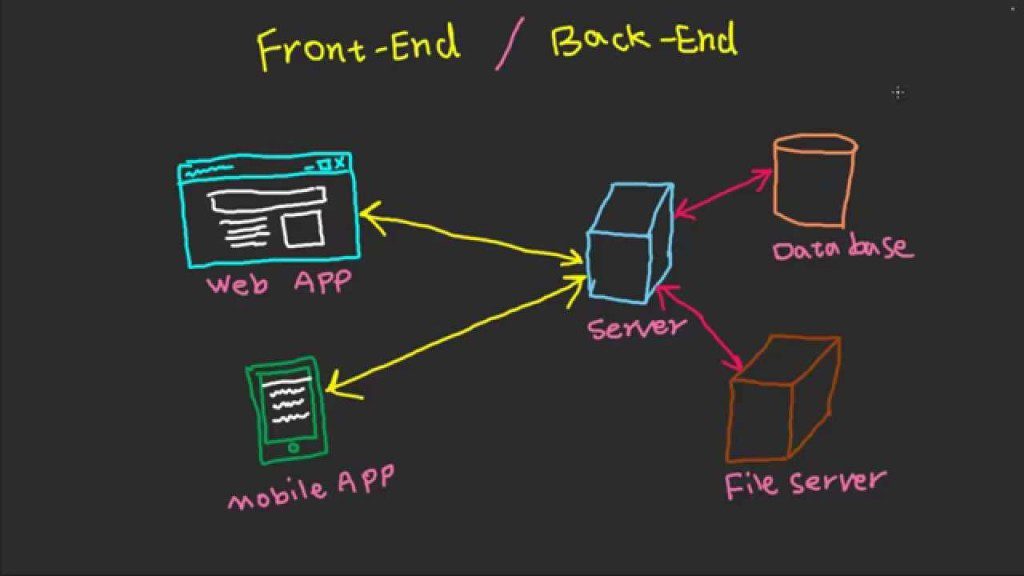

I have to admit that I have always been a big fan of ASP.NET for backend work. Although I also love the Node ecosystem, I have been involved with Microsoft technologies for as long as I remember. And, React for the front end is usually my choice. A simple web app with some amount of API support can easily be done with ASP.NET and React.

Of course, the choice of technologies ALWAYS depends on the requirements of the app. I would never choose any technology without first completely understanding what needs to be built.

John Schnipkoweit

Thanks for doing this AMA Marc, long-time follower, first-time caller.

I saw your mention about not generally coding as an fCTO – which I agree with and generally steer away from – however, on occasion, I have been able to successfully crank out a v0.1 for the right client (and sometimes to just scratch a personal coding itch). When I have done this, I have found it to be an accelerant at learning what actually needs to get built (not surprisingly) and as a result, key to building the right team. Since it sounds like you have worked with zero-to-one startups, have you found a method that works well for teams who have no engineers and limited resources to get a 0.1 version built? Do you bring in known agencies, maybe work in parallel with an accelerator, or bring on contracted engineers? Having done all of those, I understand it could just be specific to the company, but I’m curious if you have found a repeatable pattern that has created a preference for you. Thanks!

Marc Adler

Thanks for the questions, John.

If a startup can afford it, I can code up the initial skeleton of the app. I have been lucky to have been involved with some founders who have been very well-funded, and that gives me the cushion to do some coding. For most of my founders, my hourly rate is too cost-prohibitive for me to do any kind of coding.

When I had version 1 of CaaS, there were a few development firms that I used to go to. The agencies were known, and I personally vetted each and every developer that was on every one of my clients’ projects. I would do the architecture and the remote devs would do the coding.

I have stories about the various companies that I will share one of these days. But let’s just say that you really have to be very very careful when you deal with offshore development companies. The old adage of “Familiarity Breeds Contempt” is true!

John Schnipkoweit

Thanks, Marc – really great to read all your insights this morning and I appreciate the time you’ve taken to respond to everyone!

Matt Bye

Great thread. Apols if this has already been asked – how did you get into the fractional work? Thank you!

Marc Adler

Actually, nobody asked this yet, so thank you.

For many years, I had been constantly approached by people who had ideas for applications but had no idea how to build them. Invariably, they would get directed to me. Due to the time commitments that I had from my day jobs, I did not have the time to fully engage with these founders. I would often say “Let’s go to lunch or buy me a beer or three, and I will sketch out how you would hire a dev team and build your app.”

In late 2018, I reached a milestone in my life where I always said that I would retire from the workforce. When I went into “semi-retirement”, all of my friends told me that I would never be able to sit still. And they were right. So I decided to help these founders whom I had previously not been able to help, but this time, I would make it a small business, just to earn some pizza money.

I had no idea that there was such a thing as a “fractional CTO”. I just knew that I could take a non-technical founder from an idea to a full product. So CTO as a Service was born.

I started out with a single client … an EdTech company to whom I had been giving some free advice. I asked them if they knew any companies that might need my help and they said “We would love to hire you”. So I had my first client. Then word-of-mouth started to kick in.

Matt Bye

That’s great – thank you, appreciate you taking the time to reply!

Jeff Barrett

Have you run into fractional roles at other levels, say VP engineering or below? How do you differentiate between fractional CTO and being a “consultant” or “advisor”? Do you worry about executive buy-in for your engagement as much as a fractional CTO as you would as a full-time CTO?

Marc Adler

Thanks for the questions, Jeff.

Yes, there are definitely roles for fCEOs, fCPOs, and fVPEs. There are some firms that specialize in providing these kinds of talented individuals to other companies. Just the other day, on one of the Slack groups, someone was asking about hiring an fCPO (Chief Product Officer), and they got some recommendations.

> How do you differentiate between fractional CTO and being a “consultant” or “advisor”?

That’s a really interesting question. In my mind, the fCTO gets a lot more hands-on than an “advisor”. In my way of being a CTO, I can do the architectures, run the dev organizations, set up and administer the cloud environment, choose the technologies that are used, and more. To me, the “advisor” might play a much more passive role and a role that is a few thousand feet higher (although I have certainly done that job as well). The “consultant” might be tied to a single client and may look to work 40+ hours per week.

> Do you worry about executive buy-in for your engagement as much as a fractional CTO as you would as a full-time CTO?

In my model and my preferred way of working, I usually work with non-technical founders. And I consider every facet of a technical decision, including costs and risks. So it’s usually easy for me to get the buy-in of the founder, since I am usually the much more experienced person.

Jeff Barrett

Marc Adler Thank you for your perspective here. So fCTO is closer to the company day to day and has a deep commitment to the work being provided. This makes sense.

Are you leaving artifacts of your decision processes for your ultimate successor?

Is a part of your engagement model how you will disengage with your client?

Do you set an expectation of helping them hire a full-time CTO when the timing is right?

Marc Adler

Great follow-up questions, Jeff.

> Are you leaving artifacts of your decision processes for your ultimate successor?

Yes, certainly. People who know me know that I am obsessive about documentation and memorializing all design decisions. I usually insist that all of my clients have some sort of Wiki where all technical decisions can be found. I also am heavily involved in interviewing my successors.

> Is a part of your engagement model how you will disengage with your client?

It is not written as part of the contract. But this is something that I talk to my founders about … that if and when they start getting in some revenue or funding, they should look to bring on either a full-time CTO or VPE. But the founder should never be left alone with the remote development company.

Jeff Barrett

Marc Adler is it always a remote dev shop or are you ever hiring engineers into the company? I ask because to me it would feel off to hire a team for another CTO to take over so early in a company’s life.

Marc Adler

I almost always use a remote dev company. And most of the CTOs that have followed me have retained the original dev company. One of the advantages of having an fCTO like me involved is that I don’t let the dev company get away with that many mistakes, so usually, the CTO that follows me has a well-functioning team.

Jeff Barrett

Marc Adler very interesting. So are you also hiring in the dev shop, or are they often already in place? If hiring in the dev shop yourself, is it often the same shop?

Marc Adler

For most of my startup clients, I have to hire the dev shop, but it is up to the dev shop to furnish me with their developers. I do not like it when a dev shop goes to the market to hire developers for my project. I always prefer that the dev shop has the talent on hand … probably from their bench.

Jeff Barrett

Thanks for all of the answers Marc; I really appreciate it!

Mark Batty

Hi Marc, would have joined sooner but I am suffering with Covid 🙁

I assume you are US based, so curious about how much (if any) work you take in UK/Europe; and related to that is how is fractional CTO work different between the US and UK/Europe.

Marc Adler

Ugh, feel better Mark. I had it a few weeks ago, and luckily my case was very mild (just feeling tired for a few days). But another CTO that I was with at the time had symptoms that were much worse, so take care of yourself.

I have had a few clients overseas, most notably in England. I think that the hardest part is my overseas clients getting the wire transfers right 🙂

But, generally, the work is the same between the USA (and Canada) and the European countries. The most difficult thing that you have to navigate is the regulatory requirements in each country. Things that are not kosher to do in the USA could be perfectly legal in another country and vice-versa.

I have to sadly admit that I lost out on a prospective client last week. He was in Oxford, and despite having some great conversations with him, he decided to choose someone that was local to him. This made sense since it was a health tech company, and the person that he eventually chose was familiar with the UK health regulations.

Mark Batty

Thanks for the insight, Marc Adler.

Much appreciated; symptoms not too bad (my wife had it a week before me and was in bed for 2 days); but mine is dragging on 🙁

Dominic Tancredi

Love the AMA Marc.

What’s in your utility belt, as in for strategic decks, technical audits, etc? I’m always curious about what other CTOs carry on them for analyses.

Also is there a project type you most enjoy and also find most challenging?

Marc Adler

Hiya Dom. Great to see you here.

Over the years, I have accumulated lots of artifacts that I can pull out when needed. Technical due diligence lists, case studies, and PowerPoints that I have presented to both executive boards and to investors. Plus, other CTOs contribute and freely share their own lists as well, so there is a wealth of info to choose from.

As far as things that I like to do … anything where I can do some juicy architecture work. I love designing and tinkering around with stuff. There are always interesting design decisions to be made around things like … scaling … where there are ten different solutions that can always be considered.

The most challenging is tackling a new domain that is completely foreign to me … especially when it involves math (ie: quant stuff).

On a non-technical aspect, another challenging part of the fCTO job is dealing with developers who do not speak English well and the differences between a hard-driving cigar-chomping NYC type-A personality and a foreign culture.

Kathy Keating

When you have open space in your calendar for a new client the pull to take on new work is real. What are your criteria for accepting or rejecting new work?

Marc Adler

Hello to Kathy, one of my favorite CTOs!

I freely admit that I am very bad at saying “no” to potential clients. Everyone seems to have a need, and I am flattered that they would come to me for help.

That being said, there are a few limiting factors that I have on deciding to take on new clients.

1. Time. Right now, the most precious commodity for me is free time. After almost 40 years in IT, I want to do other things in my life besides CTO-ing. So, I limit the amount of time I spend with all clients to 20 hours a week max. Therefore, I will not take on any clients that want me full-time. I made an exception with XP (where I took a full-time job), but that was a situation I could not say “no” to.

2. Social mission. I will not take on clients that have a mission that is contrary to my social values. I will not take on anything that is related to cryptocurrencies. And now, I will not take on any work that involves capital markets. However, I *will* give priority to startups that want to improve any aspect of our lives.

3. Tech. As I mentioned in a previous comment, I am tending toward rejecting all jobs that involve “rescues”. It’s difficult to mine the depths of other people’s mistakes. I would like to be involved with a client when it is totally greenfield.

4. Due Diligence. I actually enjoy technical due diligence work. I get to dive into a new domain very deeply for a few weeks, and I do not have to worry about cleaning up other people’s mistakes. I just have a responsibility to the investor that hired me. So I will give preference to DD work.

Kathy Keating

Marc Adler thanks! You’re one of my favorite CTOs as well!

I tend to be really good at rescues which is why I’ve done so many. I think in the future if I do another rescue, I’ll make it an interim situation.

In prior times I would do a rescue, and when the rescue is complete and back on track – I’m left at a company that doesn’t match my values (do good in the world).

I appreciate your ethos there. It’s nice to be at a point in my career where I can be more selective.

Kathy Keating

I really appreciate your sage advice, Marc! This AMA is so informative!

Mark Robinson

What have you found to be the most successful approach when selling your CaaS service to larger clients, who typically don’t engage with smaller companies/single-seat consultancies? From the posts I’ve seen, this doesn’t seem to have been too much of an issue for you.

Marc Adler

Thanks for the question, Mark. I wouldn’t say that I have been totally successful at landing larger companies as clients, but I haven’t been a failure either because I tend to stay away from larger companies. The retainer model that I have (you pay me upfront for a certain number of hours per month and you draw down from those hours) is not the billing model that most larger companies can deal with, and one of the things that I do not want to do is rack up thousands of dollars in receivables and start chasing after the money with companies who have a net-90 day policy for payment (just look at how Elon is currently stiffing his vendors). I also tend to stay away from larger companies because of the friction that is inevitable when trying to implement something quickly.

That being said, the larger companies that I engaged with (ie: XP) were the result of a personal recommendation or a personal contact.

All said, you need to ask yourself a question… would I rather work for a small single-person startup and charge $X per hour, or would I rather help a large company and charge $X*3 per hour? For me, at this stage of my career, helping small startups is more important. Otherwise, I might still be with XP 🙂

Kunjie Qian

Hi Marc, how do you teach yourself new subjects? What is your approach to judgment and decision-making?

Marc Adler

Great questions, Kunjie.

Teaching myself new subjects … blogs and YouTube! I find that, as I get older, I do not have much patience to sit down with a good textbook like I used to. I subscribe to tons of blogs and depending on the subject, you can always find decent intro-level material on YouTube. As far as tech stuff goes, I still code, so I find myself firing up my favorite IDEs and writing small proofs of concept in order to dive into something that I need to learn.

As far as judgment and decision-making … I am going to talk about what I do now as opposed to what I did 20 years ago. I find that getting feedback from peers is very important, and I rely on some Slack groups that have experienced people in them. I often will bounce an idea off of the folks in a certain Slack channel, and I am not only guaranteed to get some good feedback, but I can often find people who have gone down the same route as I have.

Kunjie Qian

Marc Adler Thank you, Marc!

Viral (Veer) Lalan

Couple of questions: How do you charge a client for providing fractional CTO services? Also, in your experience what length (in time- weekly, monthly, etc) of retainers work the best for this kind of service?

Marc Adler

Thanks for asking this, Viral.

In my early days of CaaS, I would charge different amounts to different clients. I would always charge on an hourly basis because one of the mantras of CaaS was that a client could use me as much or as little as they wanted to. They could use me for a few hours per month, or they could actually book up to 10 hours per week.

The sliding scale would be based on the type of company it was, and the mission that it would be involved in. I tended to give the lowest rates to self-funded founders who had a mission that was in line with my own social values. Larger companies would be charged a higher rate. There would also be a standard fee for due diligence exercises.

In CaaS, the retainers would be paid each month and the founders would draw down from that retainer, much like you do when you retain a lawyer. As the project would get more mature, the founders would typically start cutting back on the hours a bit, making my exit a very gradual one. I found that staying 6 months with a founder is a good measure of time. Sometimes, I would be asked to stick around as a board member, or I would be paid out-of-band to meet with an investor.

Viral (Veer) Lalan

Marc Adler Thanks for the answer to the questions and sharing your wisdom. Appreciate it.

John O’Sullivan

OK – I’m in! Mainly because I may be copying your playbook in London in a few years. Q1: proportion of inbound vs outbound sales? How much work from existing contacts from your professional life in trading tech, versus new contacts from events, trade shows, and word of mouth? Q2: the shape of typical engagements. Rescuing failed projects vs tech due diligence for investors vs hiring and team build-out for non-tech founders. Or startup vs established SME vs corp clients. Or hands-on engineering vs strategy advice? Length of engagement, how many hours per week?

Marc Adler

Hi John. Great to have you here, and looking forward to you starting the London branch of CaaS 🙂

> How much work from existing contacts from your professional life in trading tech, versus new contacts from events, trade shows, and word of mouth?

Actually, except for XP Investments (which was a client, thanks to a quant that I worked with at Citi many moons ago), none of my clients came from trading tech, although my long background in trading tech helped with my bona fides.

I never did cold outreach to prospects. As you know, CaaS was a part-time, post-semi-retirement gig for me, so I did not want to actively grow the business. Things like events, trade shows, etc. were avenues that I did not pursue. I also had lots of offers from salespeople to help me grow my business, and I refused all of them.

All of my contracts were from either word-of-mouth or from my CTO-as-a-Service website. I used to be one of the only “CTO-as-a-Service” people out there, so it was easy to find me through a Google search. But now everyone wants to be a fractional CTO, so it’s a bit harder to get noticed through Google searches.

> shape of typical engagements. Rescuing failed projects vs tech due diligence for investors vs hiring and team build-out for non-tech founders. Or startup vs established SME vs corp clients. Or hands-on engineering vs strategy advice? Length of engagement, how many hours per week?

I definitely had my share of rescuing failed projects. As I have mentioned many times, non-tech founders should never go into a venture without a senior-level tech person by their side. So, I had my fair share of “I gave the job to a company from country XYZ and they delivered a piece of crap to me.” As I got more clients, I tended to deflect those kinds of jobs to other CTOs who wanted the work.

My sweet spot was starting with a founder from Day Zero. Helping them shape the product, hiring the development team (including interviewing every candidate), helping them write the product requirements, etc. That’s what I really prefer rather than coming late into the game and figuring out someone else’s work.

Marc Adler

The length of engagement would typically be 6 months. Then I would encourage the founder to “fire me” and try to find a full-time CTO if their finances would allow it. I always limited my hours to 20 per week, and the hours would usually be heavier at the start, when I was defining the architecture, choosing the dev team, setting up the cloud, etc. Then it would become a routine of a few meetings per week with the founders and the developers, and often, with the executives of the outsourcing company.

Marc Adler

I also did a bunch of tech due diligence work. It would usually take about 3 weeks to properly evaluate a company. Not 3 weeks of full-time work … but I felt that I had to give the proper amount of thought to my work because there was money at stake and a false negative could have consequences for the acquiring firm.

John O’Sullivan

Marc Adler cheers, Marc!

Claus Höfele

Thanks for doing this AMA!

My question: different companies need different types of CTOs. How do you match your skills to the companies that you work for?

Marc Adler

Hi Claus. Thanks for asking this interesting question.

I consider myself to be very much of a generalist, so I can take on most any CTO duties. However, there are domains that I might need to learn in order to successfully engage with a client, or alternatively, I try to bring on a specialist for the engagement (if my client’s budget will allow it). For example, in my CaaS work, I taught myself things about healthcare, EdTech, real estate, and more.

As far as “different types of CTOs” … I usually like to operate at a high level. I am not the kind of CTO that a company would hire to do coding (although I am very much still capable of that). You are so right in saying that there are different types of CTOs, and I pitch myself as a CTO that will operate at a 1000-meter level, although I still get my hands very dirty with architecture.

Mikael Schirru

Hello Marc! Sorry if one of the first questions is not related to the world of CTOs.

As an experienced IT professional, would you rather be a generalist or a specialist in some field (or even language) today?

Marc Adler

Hi Mikael, you can ask me anything, even if it’s not related to the world of CTOs.

I have always had good success at being a generalist and being able to teach myself new domains when the need arises. And I have been fortunate to be able to take some sabbaticals in order to learn new technology. For example, in 2017, I took a few months off to write an Uber clone because I wanted to dive into Scala and Akka.

That being said, there is a lot of demand out there for low-level C++ coders, especially in high-frequency trading companies. There is/was also demand for specialist languages like Q (used for the KDB+ database). In my opinion, if you are passionate about any special domain, then it’s good to do a really deep dive into that domain. But you also have to make sure that you are not “typecast” into a certain specialty (i.e., “Oh, that Mikael … he only knows C++ and trading systems, and we cannot consider him for anything else.”)

Oswaldo Fratini Filho, MSc

I thought to talk with you about Marimba… 🙂

My father is a luthier and makes a lot of Cuíca nowadays! I will take a picture and send it to you here.

I am a specialist in C++ / Qt and have good experience in hardware production/maintenance. Today I am working with BLE (Bluetooth Low Energy), and I am studying radio and the whole software stack.

The dollar is very expensive in Brazil and electronic components are missing in the world.

In this scenario, would you look favorably on a company that does reverse engineering, recycles electronic components, and offers new electronic products with innovative software to its clients?

Do you think that this global situation and the economic scenario in Brazil will persist?

I am thinking about becoming the CTO of a startup to do this.

Marc Adler

Hi Oswaldo. I have been studying classical guitar for one month, and I would love to see your father’s work!

Your idea sounds very worthwhile. Of course, I know how expensive software and electronics are in Brazil. If you are able to solve all of the issues with Brazil’s legal and regulatory system, I think that you could consider pitching your idea and getting some startup funding. Is there an organization like Techstars in Brazil? There should be organizations that will assist local startups.

You should check out the Y Combinator Startup School for some ideas about how to proceed.